Ouster just dropped a sensor that does what the autonomous vehicle industry has spent billions trying to duct-tape together. The company’s Rev8 lidar units capture color images and distance data simultaneously, from the same hardware, eliminating the need for separate cameras and lidar modules bolted onto a vehicle like afterthoughts.

The OS1 Max, Ouster’s automotive-grade variant, reaches 200 meters and shoots 360-degree video while mapping the world in three dimensions. CEO Angus Pacala didn’t mince words with TechCrunch: “For all of human history, it’s been: you buy a lidar sensor, you buy a camera, and you try to make sense of the combination with some higher-level reasoning, and waste an enormous amount of time doing this.”

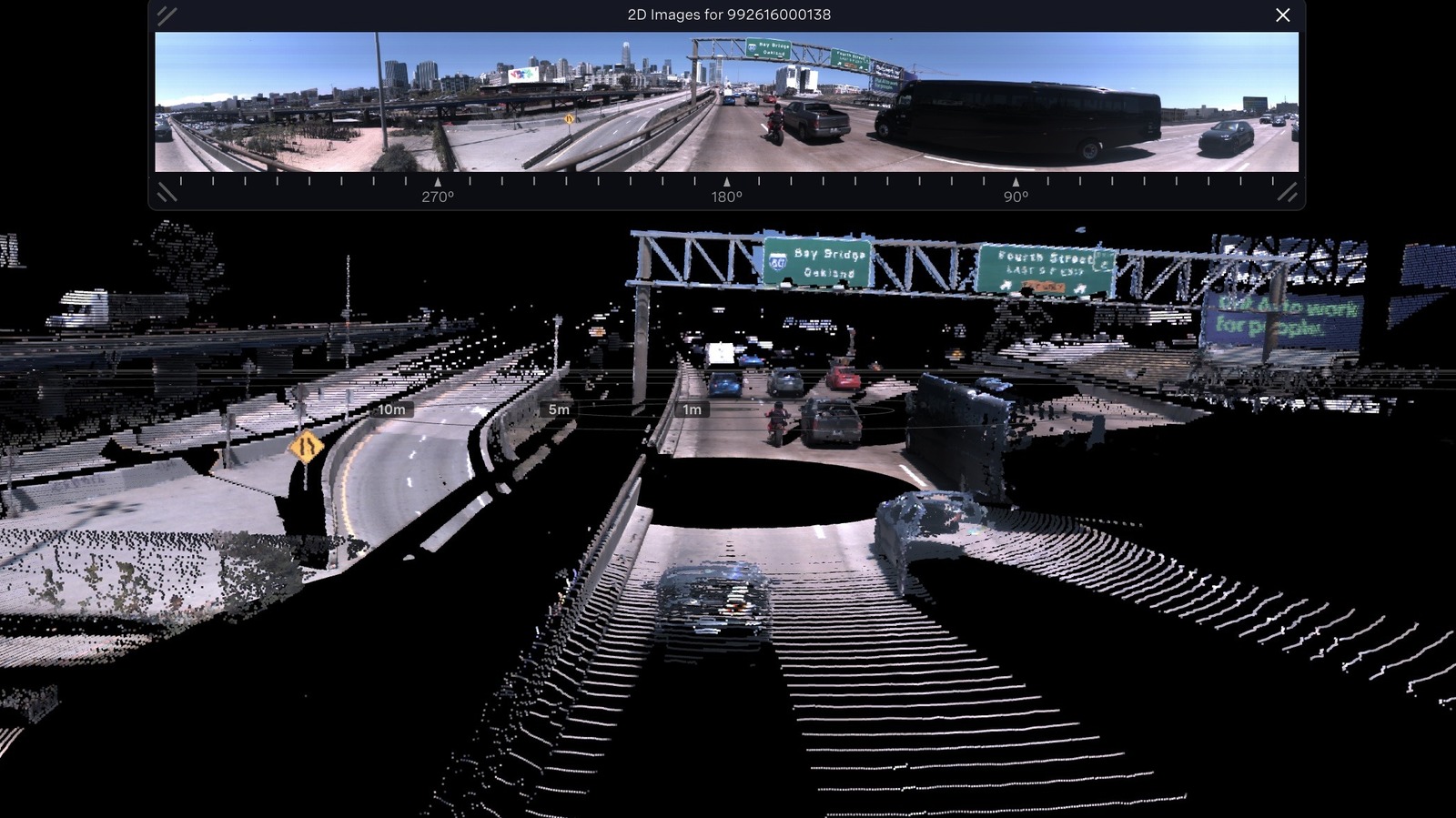

He’s not wrong. Sensor fusion — the process of marrying camera pixels to lidar point clouds — has been one of the most expensive, frustrating bottlenecks in autonomy development. Calibration drifts. Data streams fall out of sync. Engineers burn months aligning two fundamentally different ways of seeing the world. Ouster’s pitch is that none of that matters if one sensor does both jobs natively.

The timing is deliberate. Ouster also announced that its Rev8 sensors are now qualified for NVIDIA’s Drive platform, the same computing backbone Lucid has chosen for its push toward Level 4 autonomy. That’s the tier where the car handles all of the driving within its defined operating conditions and the human becomes cargo. Lucid wants to be the first consumer brand to get there, and Ouster wants to be the eyes that make it happen.

There’s an obvious foil in this story, and it’s Tesla. Elon Musk famously dismissed lidar and later pulled even radar from Tesla’s sensor suite, betting the farm on cameras alone to cut costs. That gamble has produced years of “Full Self-Driving” that still requires constant human supervision and draws regulatory scrutiny that grows by the quarter. Ouster is walking in the opposite direction: not stripping sensors down, but merging them into something more capable.

The technical trick is elegant. Lidar already works with light: it fires laser pulses and times their return. Ouster engineered its sensor to also capture color information at megapixel resolution through the same hardware that collects that range data. One sensor, two jobs. On paper, it solves the calibration nightmare because both data streams originate from an identical vantage point at an identical moment. No alignment drift. No fusion guesswork.
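To make the “calibration nightmare” concrete, here is a minimal sketch of what a conventional two-sensor setup has to do for every lidar point before it can be colored: push it through an extrinsic transform (rotation plus mounting offset) into a separate camera’s frame, then through a pinhole camera model into pixels. Everything here is a hypothetical illustration under stated assumptions, not Ouster’s or any vendor’s pipeline: the intrinsics `FX, FY, CX, CY`, the 0.3 m mounting offset, the 50 m test point, the 0.5 degree drift, and the helper names `yaw_rotation` and `project` are all made up, and both frames are assumed to share a camera-style axis convention (x right, y down, z forward) for simplicity.

```python
import math

# Hypothetical pinhole intrinsics for a separate camera (pixels): focal lengths, principal point.
FX, FY, CX, CY = 1000.0, 1000.0, 960.0, 540.0


def yaw_rotation(degrees):
    """3x3 rotation matrix about the vertical (y) axis."""
    r = math.radians(degrees)
    c, s = math.cos(r), math.sin(r)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def project(point, rotation, translation):
    """Project a lidar-frame point (x right, y down, z forward, metres) to camera pixels."""
    # Extrinsic step: move the point into the camera frame, p_cam = R @ p + t.
    x, y, z = (
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )
    if z <= 0:
        raise ValueError("point is behind the camera")
    # Intrinsic step: perspective divide, then scale and offset into pixel coordinates.
    return FX * x / z + CX, FY * y / z + CY


# Hypothetical scene: a lidar return 50 m ahead and 2 m to the right,
# with the camera mounted 0.3 m to the side of the lidar.
point = [2.0, 0.0, 50.0]
offset = [0.3, 0.0, 0.0]

u_cal, v_cal = project(point, yaw_rotation(0.0), offset)      # mounting as calibrated
u_drift, v_drift = project(point, yaw_rotation(0.5), offset)  # mount has rotated 0.5 degrees
shift = math.hypot(u_drift - u_cal, v_drift - v_cal)
print(f"pixel shift from 0.5 degrees of drift: {shift:.1f}")
```

With these made-up numbers, half a degree of mounting drift moves the point just under nine pixels, so the lidar return gets painted with color from the wrong part of the image. A single sensor that captures color and range from the same vantage point, as Ouster pitches Rev8, has no extrinsic step for that kind of drift to corrupt.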

But paper specs don’t ship cars. The question nobody at Ouster has answered yet is price. Replacing a camera-and-lidar stack only makes sense if the combined unit costs less than the hardware it eliminates. Ouster hasn’t published pricing, which is never a confidence-inspiring move when your whole value proposition hinges on economic efficiency.

There’s also the durability question. Automotive-grade means surviving years of potholes, temperature swings, salt spray, and the general violence a car endures. A sensor that handles both vision and ranging is a single point of failure, which makes it a bold engineering choice. If it dies, you lose both capabilities at once.

Still, the autonomous driving industry has been stuck in an expensive loop of bolting more hardware onto vehicles and then spending fortunes making it all talk to each other. Ouster is betting that the loop itself is the problem. One sensor, one data stream, one calibration — zero excuses.

Whether automakers buy that argument depends entirely on what’s printed on the invoice. And Ouster isn’t showing that card yet.
